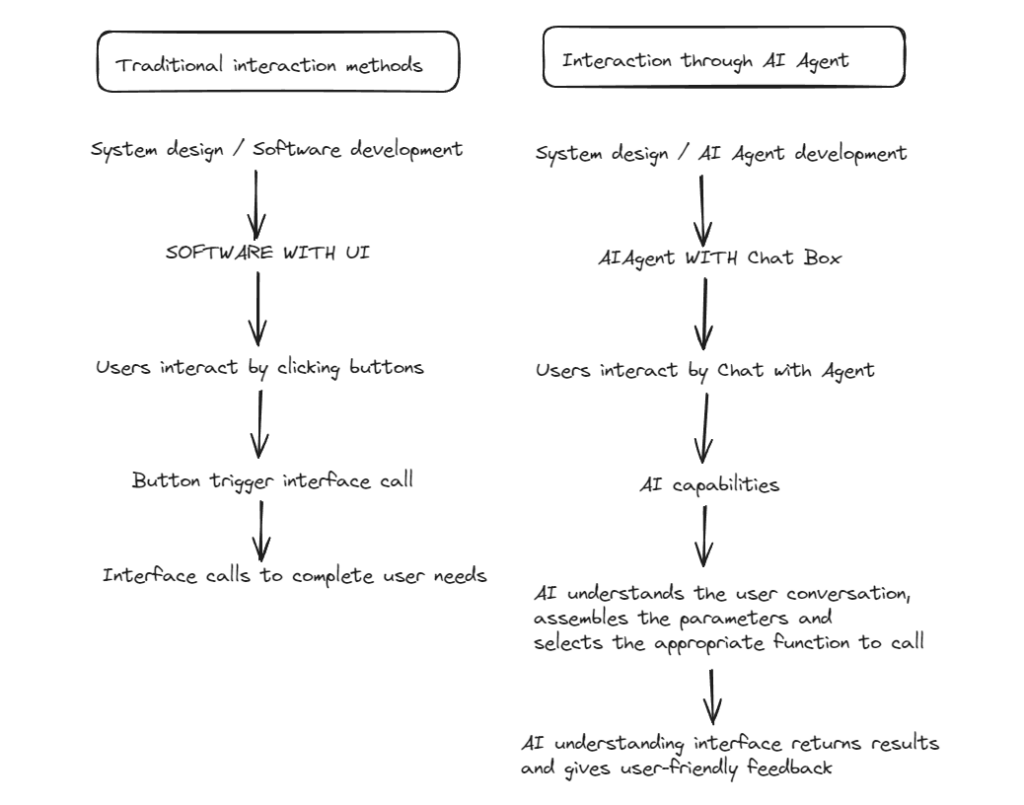

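As conversational generative AI evolves toward what we call AI agents, function calling looks like the most promising approach available today.

Users describe a task in everyday language, and the model decides which interface to call to complete it. The model also extracts the parameters for that interface from the conversation.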
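To make those two responsibilities concrete, here is a minimal sketch that runs without any API request. The order functions and the `simulated_tool_call` payload are hypothetical: the payload is written by hand to stand in for what a model might return, in the "function name plus JSON-encoded arguments" shape that function calling uses.

```python
import json

# Hypothetical backend interfaces the assistant is allowed to use.
def get_order_status(order_id):
    return f"Order {order_id} has shipped"

def cancel_order(order_id, reason="not specified"):
    return f"Order {order_id} cancelled ({reason})"

INTERFACES = {"get_order_status": get_order_status, "cancel_order": cancel_order}

# Hand-written stand-in for a model reply to the request
# "Please cancel order 1234, I ordered the wrong size." (not real API output).
simulated_tool_call = {
    "name": "cancel_order",  # which interface the model picked
    "arguments": '{"order_id": "1234", "reason": "wrong size"}',  # extracted parameters
}

def dispatch(tool_call):
    """Look up the chosen interface and call it with the extracted parameters."""
    func = INTERFACES[tool_call["name"]]
    kwargs = json.loads(tool_call["arguments"])  # arguments arrive as a JSON string
    return func(**kwargs)

result = dispatch(simulated_tool_call)
print(result)
```

The model never runs anything itself. It only names a function and supplies arguments, and your own code decides whether and how to execute the call.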

For reference:

https://platform.openai.com/docs/guides/function-calling

https://cookbook.openai.com/examples/how_to_call_functions_with_chat_model

Below is a simple run-through of the official demo. First, install the Python dependency:

    pip install openai

Then the demo script:

    from openai import OpenAI
    import json

    client = OpenAI(api_key="sk-*****************************")

    # Example dummy function hard coded to return the same weather
    # In production, this could be your backend API or an external API
    def get_current_weather(location, unit="fahrenheit"):
        """Get the current weather in a given location"""
        if "tokyo" in location.lower():
            return json.dumps({"location": "Tokyo", "temperature": "10", "unit": unit})
        elif "san francisco" in location.lower():
            return json.dumps({"location": "San Francisco", "temperature": "72", "unit": unit})
        elif "paris" in location.lower():
            return json.dumps({"location": "Paris", "temperature": "22", "unit": unit})
        else:
            return json.dumps({"location": location, "temperature": "unknown"})

    def run_conversation():
        # Step 1: send the conversation and available functions to the model
        messages = [{"role": "user", "content": "What's the weather like in San Francisco, Tokyo, and Paris?"}]
        tools = [
            {
                "type": "function",
                "function": {
                    "name": "get_current_weather",
                    "description": "Get the current weather in a given location",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "location": {
                                "type": "string",
                                "description": "The city and state, e.g. San Francisco, CA",
                            },
                            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
                        },
                        "required": ["location"],
                    },
                },
            }
        ]
        response = client.chat.completions.create(
            model="gpt-3.5-turbo-0125",
            messages=messages,
            tools=tools,
            tool_choice="auto",  # auto is default, but we'll be explicit
        )
        response_message = response.choices[0].message
        tool_calls = response_message.tool_calls
        # Step 2: check if the model wanted to call a function
        if tool_calls:
            # Step 3: call the function
            # Note: the JSON response may not always be valid; be sure to handle errors
            available_functions = {
                "get_current_weather": get_current_weather,
            }  # only one function in this example, but you can have multiple
            messages.append(response_message)  # extend conversation with assistant's reply
            # Step 4: send the info for each function call and function response to the model
            for tool_call in tool_calls:
                function_name = tool_call.function.name
                function_to_call = available_functions[function_name]
                function_args = json.loads(tool_call.function.arguments)
                function_response = function_to_call(
                    location=function_args.get("location"),
                    unit=function_args.get("unit"),
                )
                messages.append(
                    {
                        "tool_call_id": tool_call.id,
                        "role": "tool",
                        "name": function_name,
                        "content": function_response,
                    }
                )  # extend conversation with function response
            second_response = client.chat.completions.create(
                model="gpt-3.5-turbo-0125",
                messages=messages,
            )  # get a new response from the model where it can see the function response
            return second_response

    print(run_conversation())

The call returns:

    ChatCompletion(id='chatcmpl-**', choices=[Choice(finish_reason='stop', index=0, logprobs=None, message=ChatCompletionMessage(content='Currently in San Francisco, the temperature is 72°C. In Tokyo, the temperature is 10°C. And in Paris, the temperature is 22°C.', role='assistant', function_call=None, tool_calls=None))], created=1711365951, model='gpt-35-turbo', object='chat.completion', system_fingerprint='fp_2f57f81c11', usage=CompletionUsage(completion_tokens=33, prompt_tokens=169, total_tokens=202))

You can get your OpenAI API key at: https://platform.openai.com/api-keys
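The demo's own comment warns that the JSON arguments produced by the model may not always be valid. One way to guard against that is sketched below. `safe_execute` is a hypothetical helper written for this post, not part of the `openai` library, and the weather function is trimmed to a single city to keep the sketch short. It also drops `null` arguments, because in the demo `function_args.get("unit")` yields `None` when the model omits the unit, and passing that `None` overrides the function's `"fahrenheit"` default.

```python
import json

def get_current_weather(location, unit="fahrenheit"):
    """Same dummy lookup idea as the demo, trimmed to one city."""
    if "tokyo" in location.lower():
        return json.dumps({"location": "Tokyo", "temperature": "10", "unit": unit})
    return json.dumps({"location": location, "temperature": "unknown"})

AVAILABLE_FUNCTIONS = {"get_current_weather": get_current_weather}

def safe_execute(function_name, raw_arguments):
    """Run a requested function, returning a JSON error string instead of raising."""
    func = AVAILABLE_FUNCTIONS.get(function_name)
    if func is None:
        return json.dumps({"error": f"unknown function: {function_name}"})
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return json.dumps({"error": f"invalid JSON arguments: {exc.msg}"})
    if not isinstance(args, dict):
        return json.dumps({"error": "arguments must be a JSON object"})
    # Drop null values so the Python defaults (e.g. unit="fahrenheit") apply.
    args = {key: value for key, value in args.items() if value is not None}
    try:
        return func(**args)
    except TypeError as exc:
        # Typically raised when a required parameter is missing or an
        # unexpected one is supplied.
        return json.dumps({"error": f"bad arguments: {exc}"})

print(safe_execute("get_current_weather", '{"location": "Tokyo", "unit": null}'))
print(safe_execute("get_current_weather", '{"location": '))
```

In the demo loop, this could stand in for the direct call, for example `function_response = safe_execute(tool_call.function.name, tool_call.function.arguments)`. The error string is then sent back as the tool message, so the model can see what went wrong instead of the script crashing.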